Autonomous systems face an infinite variety of edge cases in the physical world. LexData provides the continuous perception validation that keeps robotic navigation, manipulation, and decision-making reliable — even when environments change.

Every new environment, lighting condition, object placement, and human interaction creates a potential failure mode. You can't train for every scenario — you need a system that adapts.

Sensor calibration drifts. Cameras get dirty. Lighting changes. Your robot's perception slowly degrades and nobody notices until it misses a pallet or misidentifies an obstacle.

Crowd-sourced annotation with inconsistent quality introduces noise into safety-critical perception systems. A mislabeled pedestrian in AV training data isn't just a data quality issue. It's a safety risk.

Pixel-level semantic segmentation, LiDAR point cloud annotation, sensor fusion labeling, and temporal event tracking for complex perception tasks. Expert annotators — not crowd-sourced gig workers.

Build spatial understanding from flat labels. Map object relationships, track movement patterns, and identify previously unseen anomalies that standard training data misses.

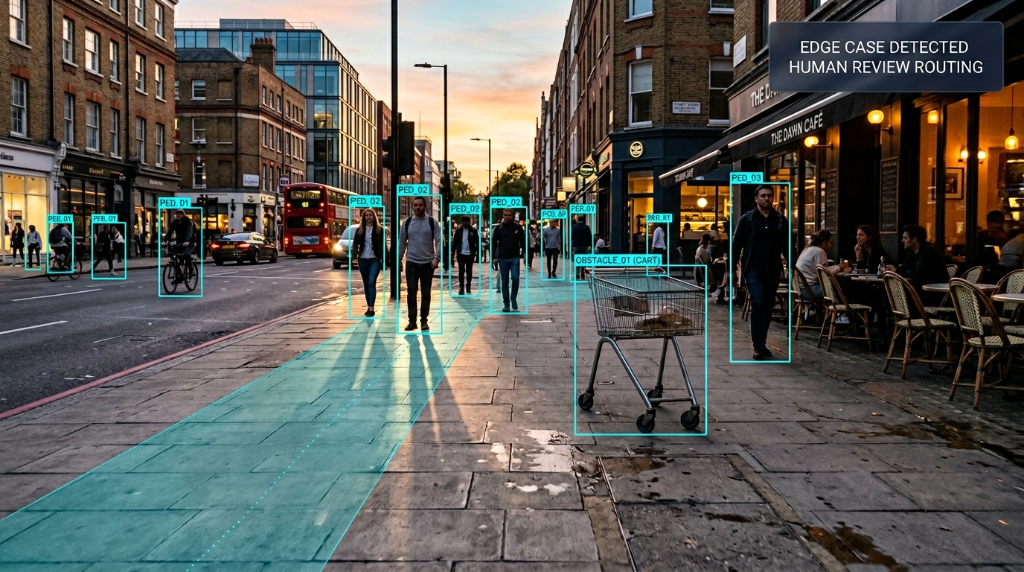

Continuously validate perception performance in production. Detect when sensor drift or environmental changes degrade navigation. Route failures back for retraining before they cause incidents.
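As a rough illustration of what production validation can look like, the sketch below flags drift when the rolling mean of detection confidence falls below a baseline, and queues the affected frames for review and retraining. This is a minimal example under stated assumptions, not LexData's implementation: the `DriftMonitor` class, the baseline of 0.9, the tolerance, the window size, and the sample confidence values are all hypothetical.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Hypothetical monitor: flags drift when rolling mean confidence drops below a baseline."""

    def __init__(self, baseline, tolerance=0.1, window=5):
        self.baseline = baseline            # expected mean confidence (assumed value)
        self.tolerance = tolerance          # allowed drop before drift is flagged
        self.scores = deque(maxlen=window)  # most recent confidence scores
        self.retrain_queue = []             # frame ids routed back for review

    def observe(self, frame_id, confidence):
        """Record one frame's confidence; return True if drift is detected."""
        self.scores.append(confidence)
        window_full = len(self.scores) == self.scores.maxlen
        drifting = window_full and mean(self.scores) < self.baseline - self.tolerance
        if drifting:
            self.retrain_queue.append(frame_id)
        return drifting


# Simulated confidence stream: healthy at first, then degrading (made-up numbers).
monitor = DriftMonitor(baseline=0.9, tolerance=0.1, window=3)
stream = [0.92, 0.91, 0.90, 0.70, 0.65, 0.60]
flags = [monitor.observe(i, c) for i, c in enumerate(stream)]
print(flags)
print(monitor.retrain_queue)
```

A rolling window keeps a single noisy frame from triggering an alert. A real deployment would likely track several signals (calibration residuals, per-class recall against human-verified samples) instead of confidence alone.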

Synchronized annotation for camera, LiDAR, and radar data streams.

Identifying and labeling corner cases for path planning algorithms.

Precise 3D object pose estimation for robotic arms.

Dynamic updating of facility maps for AMR fleets.

Safety-critical object classification in crowded environments.

Monitoring changes in physical spaces over time.

Flat bounding boxes failed to provide spatial context for autonomous material handling in shifting environments.

Deployed pixel-level semantic segmentation and temporal event tracking for high-fidelity spatial datasets.

Enabled autonomous systems to adapt to changing environments safely. Reduced manual overrides.

"Full-time domain experts. Not crowd-sourced gig workers. Every label is accountable."

"Continuous perception validation in production, not just training data delivery."

"99%+ accuracy with human verification for safety-critical systems."

"Proven with Bonsai Robotics for autonomous outdoor navigation."